Scraping NetEase Cloud Music Comments with Scrapy: No API Needed, Fast [3]

2020-12-18 12:35

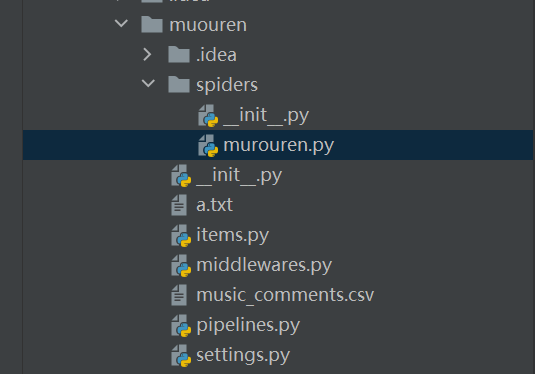

First, create muouren.py under the spiders directory (the spider code below). Next, add the two middleware classes to middlewares.py, then add the configuration to settings.py. Finally, place a.txt, a file of proxy IPs you have already collected, in the same directory. With this setup you can quickly scrape 1,000 pages of data; comments beyond that require the API.

Original article: https://www.cnblogs.com/aotumandaren/p/13764211.html

# spiders/muouren.py
import json
import time

import scrapy


class MyspiderSpider(scrapy.Spider):
    name = "muou"

    def start_requests(self):
        # One request per offset value from 1 to 999 (999 requests in total)
        urls = ['http://music.163.com/api/v1/resource/comments/R_SO_4_1374051000?&offset=%s' % i
                for i in range(1, 1000)]
        for url in urls:
            yield scrapy.Request(url=url, callback=self.parse)

    def parse(self, response):
        data = json.loads(response.text)
        # Append to the CSV; utf-8-sig writes a BOM, which helps Excel recognize UTF-8
        with open('music_comments.csv', 'a', encoding='utf-8-sig') as f:
            for comment in data['comments']:
                # User name (ASCII commas become full-width ones so they cannot split the CSV row)
                user_name = comment['user']['nickname'].replace(',', ',')
                # User ID
                user_id = str(comment['user']['userId'])
                # Comment text: stripped, newlines removed, commas made full-width
                com = comment['content'].strip().replace('\n', '').replace(',', ',')
                # Comment ID
                comment_id = str(comment['commentId'])
                # Number of likes
                praise = str(comment['likedCount'])
                # Comment time: the first 10 digits of the timestamp are the seconds
                date = time.localtime(int(str(comment['time'])[:10]))
                structed_date = time.strftime("%Y-%m-%d %H:%M:%S", date)
                print(user_name, user_id, com, comment_id, praise, structed_date)
                f.write(
                    user_name + ',' + user_id + ',' + com + ',' + comment_id + ',' + praise + ',' + structed_date + '\n')
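The spider builds each CSV line by joining fields with commas, so any comma or newline inside a nickname or comment has to be dealt with by hand. As an alternative, here is a minimal sketch using Python's standard csv module, which quotes such fields instead of altering them. The helper name comment_to_row and the sample record are illustrative assumptions, not part of the original post; the keys mirror the ones read in parse, and the timestamp is assumed to be in milliseconds.

```python
import csv
import io
import time


def comment_to_row(comment):
    """Flatten one comment dict (same keys the spider reads) into a list of CSV fields."""
    seconds = comment['time'] // 1000  # assumption: the timestamp is in milliseconds
    when = time.strftime("%Y-%m-%d %H:%M:%S", time.localtime(seconds))
    return [
        comment['user']['nickname'],
        comment['user']['userId'],
        comment['content'].strip(),
        comment['commentId'],
        comment['likedCount'],
        when,
    ]


# Hypothetical sample record, shaped like the fields the spider reads
sample = {
    'user': {'nickname': 'a,b', 'userId': 1},
    'content': ' line one\nline two ',
    'commentId': 42,
    'likedCount': 7,
    'time': 1608266100000,
}

buffer = io.StringIO()  # stands in for open('music_comments.csv', 'a', newline='')
csv.writer(buffer).writerow(comment_to_row(sample))
csv_text = buffer.getvalue()
```

In a real spider the writer would wrap the file opened in parse, with newline='' passed to open as the csv module documentation recommends.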

# middlewares.py
import random


class NovelUserAgentMiddleWare(object):  # random User-Agent
    def __init__(self):
        self.user_agent_list = [
            "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.1 (KHTML, like Gecko) Chrome/22.0.1207.1 Safari/537.1",
            "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/536.6 (KHTML, like Gecko) Chrome/20.0.1092.0 Safari/536.6",
            "Mozilla/5.0 (Windows NT 6.2) AppleWebKit/536.6 (KHTML, like Gecko) Chrome/20.0.1090.0 Safari/536.6",
            "Mozilla/5.0 (Windows NT 6.2; WOW64) AppleWebKit/537.1 (KHTML, like Gecko) Chrome/19.77.34.5 Safari/537.1",
            "Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/536.5 (KHTML, like Gecko) Chrome/19.0.1084.9 Safari/536.5",
            "Mozilla/5.0 (Windows NT 6.0) AppleWebKit/536.5 (KHTML, like Gecko) Chrome/19.0.1084.36 Safari/536.5",
            "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/536.3 (KHTML, like Gecko) Chrome/19.0.1063.0 Safari/536.3",
            "Mozilla/5.0 (Windows NT 5.1) AppleWebKit/536.3 (KHTML, like Gecko) Chrome/19.0.1063.0 Safari/536.3",
            "Mozilla/5.0 (Windows NT 6.2) AppleWebKit/536.3 (KHTML, like Gecko) Chrome/19.0.1062.0 Safari/536.3",
            "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/536.3 (KHTML, like Gecko) Chrome/19.0.1062.0 Safari/536.3",
            "Mozilla/5.0 (Windows NT 6.2) AppleWebKit/536.3 (KHTML, like Gecko) Chrome/19.0.1061.1 Safari/536.3",
            "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/536.3 (KHTML, like Gecko) Chrome/19.0.1061.1 Safari/536.3",
            "Mozilla/5.0 (Windows NT 6.1) AppleWebKit/536.3 (KHTML, like Gecko) Chrome/19.0.1061.1 Safari/536.3",
            "Mozilla/5.0 (Windows NT 6.2) AppleWebKit/536.3 (KHTML, like Gecko) Chrome/19.0.1061.0 Safari/536.3",
            "Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/535.24 (KHTML, like Gecko) Chrome/19.0.1055.1 Safari/535.24",
        ]

    def process_request(self, request, spider):
        ua = random.choice(self.user_agent_list)
        print('User-Agent:' + ua)
        # Assign directly: setdefault() would keep the User-Agent already set
        # by Scrapy's built-in UserAgentMiddleware (priority 500)
        request.headers['User-Agent'] = ua


# Also add this class to middlewares.py
class NovelProxyMiddleWare(object):  # random proxy IP
    def process_request(self, request, spider):
        proxy = self.get_random_proxy()
        print("Request proxy is {}".format(proxy))
        request.meta["proxy"] = "http://" + proxy

    def get_random_proxy(self):
        # Read the proxy list; a.txt must exist in the working directory,
        # with one proxy address per line (blank lines are skipped)
        with open('a.txt', 'r', encoding="utf-8") as f:
            proxies = [line.strip() for line in f if line.strip()]
        return random.choice(proxies)
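Since get_random_proxy reads a.txt on every request, one possible refinement is to parse the list once and reuse it. The following is a minimal sketch under the assumption that the file holds one address per line; parse_proxy_list and pick_proxy are hypothetical helper names, and the addresses are placeholders from a documentation range, not working proxies.

```python
import random


def parse_proxy_list(text):
    """Return the non-empty, stripped lines of a proxy file as a list."""
    return [line.strip() for line in text.splitlines() if line.strip()]


def pick_proxy(proxies, rng=random):
    """Pick one entry at random and prefix it the same way the middleware does."""
    return "http://" + rng.choice(proxies)


# Placeholder content standing in for a.txt, including a blank line
sample_text = "192.0.2.10:8080\n\n192.0.2.11:3128\n"
proxies = parse_proxy_list(sample_text)
chosen = pick_proxy(proxies, random.Random(0))
```

In the middleware, the list could be built once in __init__ from the contents of a.txt, with process_request calling pick_proxy for each request.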

# settings.py
DOWNLOADER_MIDDLEWARES = {
    'muouren.middlewares.NovelUserAgentMiddleWare': 544,  # random User-Agent
    'muouren.middlewares.NovelProxyMiddleWare': 543,  # random proxy IP; replace the project name (muouren) with your own
}
CONCURRENT_REQUESTS = 100
CONCURRENT_REQUESTS_PER_DOMAIN = 100
CONCURRENT_REQUESTS_PER_IP = 100
COOKIES_ENABLED = False
RETRY_ENABLED = True  # enable retries
RETRY_TIMES = 3  # number of retries
DOWNLOAD_TIMEOUT = 10  # timeout in seconds
RETRY_HTTP_CODES = [429, 404, 403]  # HTTP status codes that trigger a retry
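The numbers in DOWNLOADER_MIDDLEWARES are priorities: Scrapy merges them with its built-in middlewares and calls process_request in increasing order. A rough sketch of that ordering in plain Python follows. request_order is a hypothetical helper, not a Scrapy function, and only three built-ins are listed, with the priorities given in Scrapy's DOWNLOADER_MIDDLEWARES_BASE (worth checking against your installed version).

```python
# Priorities of a few built-in downloader middlewares, as listed in
# Scrapy's DOWNLOADER_MIDDLEWARES_BASE (check your installed version).
BUILTIN = {
    'scrapy.downloadermiddlewares.useragent.UserAgentMiddleware': 500,
    'scrapy.downloadermiddlewares.retry.RetryMiddleware': 550,
    'scrapy.downloadermiddlewares.httpproxy.HttpProxyMiddleware': 750,
}

# The two custom entries from settings.py
CUSTOM = {
    'muouren.middlewares.NovelUserAgentMiddleWare': 544,
    'muouren.middlewares.NovelProxyMiddleWare': 543,
}


def request_order(*settings):
    """Merge the dicts and return class names in the order process_request runs."""
    merged = {}
    for mapping in settings:
        merged.update(mapping)
    # A priority of None disables a middleware
    enabled = {path: prio for path, prio in merged.items() if prio is not None}
    return [path.rsplit('.', 1)[-1] for path in sorted(enabled, key=enabled.get)]


order = request_order(BUILTIN, CUSTOM)
print(order)
```

Because the built-in UserAgentMiddleware (500) runs first and fills in a default User-Agent, a middleware at 544 only changes the header if it assigns it directly; calling setdefault there leaves the built-in value in place. The proxy middleware at 543 sets request.meta["proxy"] before the built-in HttpProxyMiddleware (750) reads it.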
