Python: Word Segmentation, POS Tagging, TF-IDF, Word Frequency Counting, and Word Clouds for Hotel Reviews

2021-05-03 04:28

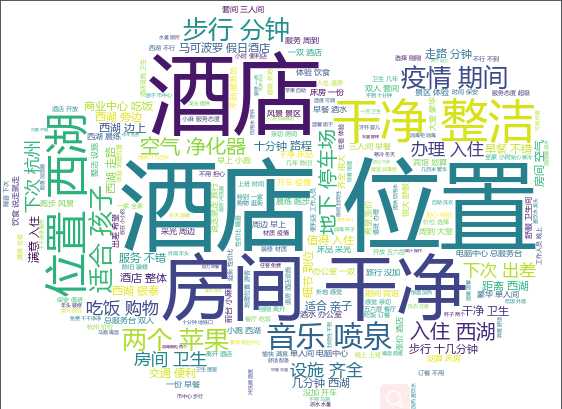

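1. Word segmentation and POS tagging with jieba

Approach:

(1) Use pandas to read the hotel customer reviews from a CSV file, and create three new columns to hold the segmentation result, the POS tagging result, and the combined segmentation + POS result.
(2) Use the posseg module of the jieba segmentation tool to perform segmentation and POS tagging in one pass.
(3) Filter the segmentation result against a stop-word list.
(4) Write the segmentation results to txt files in batches of 20,000 reviews, for the later word frequency counting and word cloud generation.
(5) Save the final segmentation and POS tagging results to a CSV file.

2. Word frequency counting

3. Word cloud generation

First install wordcloud with conda, then work through the simplest introductory example and my own word cloud example.

Reference: https://www.cnblogs.com/wkfvawl/p/11585986.html

4. TF-IDF keyword extraction

Original article: https://www.cnblogs.com/luckyplj/p/13199336.html

# coding:utf-8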

import time

import jieba
import jieba.analyse
import jieba.posseg as pseg
import pandas as pd

# The CSV path is relative to the directory that contains this .py file
df = pd.read_csv('csvfiles/hotelreviews_after_filter_utf.csv', header=None)

# Assign column names after loading, because the file has no header row
columns_name = ['mysql_id', 'hotelname', 'customername', 'reviewtime', 'checktime',
                'reviews', 'scores', 'type', 'room', 'useful', 'likenumber']
df.columns = columns_name

df['review_split'] = 'new'      # column for the segmentation result
df['review_pos'] = 'new'        # column for the POS tagging result
df['review_split_pos'] = 'new'  # column for the combined word/POS result

# Segment a review with jieba and tag each word with its part of speech
def jieba_cut(review):
    # pseg.cut yields (word, flag) pairs; dict() keeps one entry per distinct word,
    # so a word repeated within the same review appears only once
    review_dict = dict(pseg.cut(review))
    return review_dict

# Load the stop-word list (one word per line)
def stopwordslist(stopwords_path):
    with open(stopwords_path, encoding='UTF-8') as f:
        stopwords = [line.strip() for line in f]
    return stopwords

# Build the segmentation string, the POS string, and the combined word/POS string
def get_fenciresult_cixin(review_dict_afterfilter):
    keys = list(review_dict_afterfilter.keys())      # the words
    values = list(review_dict_afterfilter.values())  # their POS tags
    review_split = "/".join(keys)
    review_pos = "/".join(values)
    review_split_pos_list = []
    for word, flag in zip(keys, values):
        review_split_pos_list.append(word + "/" + flag)
    review_split_pos = ",".join(review_split_pos_list)
    return review_split, review_pos, review_split_pos
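
Building a dict from the (word, flag) pairs means that a word repeated within one review is stored only once. The sketch below illustrates that behaviour with hard-coded pairs standing in for real `pseg.cut` output. The sample pairs and the names `pairs`, `as_dict`, and `as_list` are illustrative assumptions, not part of the original script.

```python
# Hypothetical (word, flag) pairs standing in for the output of pseg.cut
pairs = [("酒店", "n"), ("很", "d"), ("干净", "a"),
         ("酒店", "n"), ("很", "d"), ("温馨", "a")]

# dict() keeps one entry per distinct word, in order of first appearance
as_dict = dict(pairs)

# A list of tuples keeps every occurrence, which matters if repetitions
# within a review should count toward word frequencies
as_list = list(pairs)

print(len(as_dict))                     # number of distinct words
print(len(as_list))                     # number of tokens
print("/".join(w for w, _ in as_list))  # segmentation string with repeats kept
```

If per-review repetitions matter for the later word frequency counts, keeping a list of pairs instead of a dict is one possible alternative; the rest of the script would then need to iterate over pairs rather than dict items.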

# Load the stop words once; a set makes the membership test below fast
stopwords = set(stopwordslist("stopwords_txt/total_stopwords_after_filter.txt"))

# review="刚刚才离开酒店,这是一次非常愉快满意住宿体验。酒店地理位置对游客来说相当好,离西湖不行不到十分钟,离地铁口就几百米,周围是繁华商业中心,吃饭非常方便。酒店外观虽然有些年头,但里面装修一点不过时,我是一个对卫生要求高的,对比很满意,屋里有消毒柜可以消毒杯子,每天都有送两个苹果。三楼还有自助洗衣,住客是免费的,一切都干干净净,服务也很贴心,在这寒冷的冬天,住这里很温暖很温馨"

# Segmentation and POS tagging
def fenci_and_pos(review):
    # 01 Segment and POS-tag in one pass with jieba's pseg; returns a dict {word1: flag1, word2: flag2}
    review_dict = jieba_cut(review)
    # print(review_dict)

    # 02 Stop-word filtering
    review_dict_afterfilter = {}
    for key, value in review_dict.items():
        if key not in stopwords:
            review_dict_afterfilter[key] = value
    # print(review_dict_afterfilter)

    # 03 Build the segmentation, POS, and combined word/POS strings
    review_split, review_pos, review_split_pos = get_fenciresult_cixin(review_dict_afterfilter)
    return review_split, review_pos, review_split_pos

# Convert the elapsed time in seconds into whole minutes and seconds
def fenci_pos_time(start_time, end_time):
    elapsed_time = end_time - start_time
    elapsed_mins = int(elapsed_time / 60)
    elapsed_secs = int(elapsed_time - (elapsed_mins * 60))
    return elapsed_mins, elapsed_secs

# fenci_and_pos(review)
# jieba.load_userdict('stopwords_txt/user_dict.txt')  # load a user-defined dictionary

start_time = time.time()
review_count = 0  # number of reviews written to the current txt file
txt_id = 1        # index of the current txt file

for index, row in df.iterrows():
    reviews = row['reviews']
    review_split, review_pos, review_split_pos = fenci_and_pos(reviews)
    # print(review_split)
    # print(review_pos)
    # print(review_split_pos)
    review_mysql_id = row['mysql_id']
    print(review_mysql_id)  # print the ID of the review being processed
    df.loc[index, 'review_split'] = review_split
    df.loc[index, 'review_pos'] = review_pos
    df.loc[index, 'review_split_pos'] = review_split_pos

    # Append the segmentation result to a txt file, one review per line,
    # and start a new file after every 20000 reviews
    if review_count >= 20000:
        txt_id += 1
        review_count = 0
    review_count += 1
    review_split_txt_path = 'split_result_txt/split_txt_' + str(txt_id) + '.txt'
    with open(review_split_txt_path, 'a', encoding='utf-8') as f:
        f.write('\n' + review_split)

# header=None: do not write the column names to the CSV
df.to_csv('csvfiles/hotelreviews_fenci_pos.csv', header=None, index=False)

# Measure the time spent on segmentation and POS tagging
end_time = time.time()
fenci_mins, fenci_secs = fenci_pos_time(start_time, end_time)
print(f'Fenci Time: {fenci_mins}m {fenci_secs}s')
print("Segmentation and POS tagging finished: hotelreviews_fenci_pos.csv")
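
The batching rule from step (4), one txt file per 20,000 reviews, can also be expressed as a small helper that maps a review's position to its output file. This is only a sketch under assumptions: `batch_file_id`, `batch_path`, and `batch_size` are hypothetical names, and reviews are numbered from 0 in processing order.

```python
def batch_file_id(review_number, batch_size=20000):
    """Return the 1-based txt file index for the review at 0-based position review_number."""
    return review_number // batch_size + 1


def batch_path(review_number, batch_size=20000):
    """Build the output path, following the split_txt_<id>.txt naming used in the script."""
    file_id = batch_file_id(review_number, batch_size)
    return 'split_result_txt/split_txt_' + str(file_id) + '.txt'


# The first 20000 reviews (positions 0..19999) share file 1; position 20000 starts file 2
print(batch_path(0))
print(batch_path(19999))
print(batch_path(20000))
```

Deriving the file index from the position avoids keeping two counters in sync inside the loop.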

# Word frequency counting function
def wordfreqcount(review_split_txt_path):
    wordfreq = {}  # word -> number of occurrences
    # Read the file line by line, split each line on "/", and count every word
    with open(review_split_txt_path, 'r', encoding='utf-8') as f:
        for line in f:
            line = line.strip()  # drop the trailing newline so it does not stick to the last word
            if not line:
                continue  # skip empty lines, such as the leading blank line
            for word in line.split("/"):
                if word:
                    wordfreq[word] = wordfreq.get(word, 0) + 1
    word_freq_list = list(wordfreq.items())
    word_freq_list.sort(key=lambda x: x[1], reverse=True)
    return word_freq_list

# Path of the txt file that holds the segmentation results
txt_id = 1
review_split_txt_path = 'split_result_txt/split_txt_' + str(txt_id) + '.txt'
word_freq_list = wordfreqcount(review_split_txt_path)

# Print the 10 most frequent words and their counts
for item in word_freq_list[:10]:
    print(item)
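
The same counting can be done with `collections.Counter` from the standard library. The sketch below works on in-memory sample lines instead of a file, so it runs on its own. The function name `count_words` and the sample lines are assumptions made for illustration.

```python
from collections import Counter


def count_words(lines):
    """Count words in an iterable of "/"-separated lines."""
    counter = Counter()
    for line in lines:
        line = line.strip()
        if line:
            # Skip empty fragments that can appear between consecutive slashes
            counter.update(word for word in line.split("/") if word)
    return counter


# Hypothetical lines in the same format as the split_txt files
sample_lines = ["", "酒店/干净/服务", "酒店/位置/方便", "服务/酒店"]
freq = count_words(sample_lines)

# most_common returns (word, count) pairs sorted by descending count
for word, count in freq.most_common(3):
    print(word, count)
```

To apply it to a real file, an open file object can be passed as `lines`, since iterating over a file yields its lines.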

Install wordcloud with conda (a terminal command, not Python code):

conda install -c conda-forge wordcloud

import wordcloud

# Build the word cloud object w and set its width, height, background color, and font
w = wordcloud.WordCloud(width=1000, height=700, background_color='white', font_path='msyh.ttc')

# Pass the text to the generate method of the word cloud object
w.generate('从明天起,做一个幸福的人。喂马、劈柴,周游世界。从明天起,关心粮食和蔬菜。我有一所房子,面朝大海,春暖花开')

# Save the generated word cloud as output2-poem.png in the current folder
w.to_file('output2-poem.png')

import wordcloud
# Use imread from the imageio library to load a local image as the shape mask of the word cloud
import imageio

mk = imageio.imread("pic/qiqiu2.png")

# Build and configure the word cloud object w
w = wordcloud.WordCloud(
    max_words=200,  # maximum number of words shown in the word cloud
    background_color='white',
    mask=mk,
    font_path='msyh.ttc',  # font path; the font file is not in the project folder (so this setting probably has no effect)
)

# Path of the txt file that holds the segmentation results
txt_id = 1
review_split_txt_path = 'split_result_txt/split_txt_' + str(txt_id) + '.txt'
with open(review_split_txt_path, 'r', encoding='utf-8') as f:
    string = f.read()
print(string)

# Pass the string to w.generate() as the text of the word cloud
w.generate(string)

# Export the word cloud image to the current folder
w.to_file('output5-tongji.png')

import jieba.analyse  # the analyse submodule must be imported explicitly

txt_id = 1
review_split_txt_path = 'split_result_txt/split_txt_' + str(txt_id) + '.txt'
with open(review_split_txt_path, 'r', encoding='utf-8') as f:
    review_split = f.read()
print("review_split:" + review_split)

# test_reviews="刚刚才离开酒店,这是一次非常愉快满意住宿体验。"
# review_split, review_pos, review_split_pos=fenci_and_pos(test_reviews)
# print(review_split)

# Extract the 10 highest-weighted keywords; withWeight=True returns (word, weight) pairs
keywords = jieba.analyse.extract_tags(review_split, topK=10, withWeight=True)
print('[Keywords extracted by TF-IDF:]')
print(keywords)  # keywords extracted with the default IDF file
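
For intuition about what the weights mean, here is a minimal sketch of textbook TF-IDF over a tiny corpus, using only the standard library. It is not jieba's exact computation: `extract_tags` takes its IDF values from a prebuilt IDF file, while this sketch derives them from the sample documents. The function name `tf_idf` and the sample documents are assumptions made for illustration.

```python
import math
from collections import Counter


def tf_idf(docs):
    """Return one {word: weight} dict per document.

    tf  = occurrences of the word in the document / number of words in the document
    idf = log(number of documents / number of documents containing the word)
    """
    n_docs = len(docs)
    doc_freq = Counter()
    for doc in docs:
        doc_freq.update(set(doc))  # each document counts once per word
    weights = []
    for doc in docs:
        counts = Counter(doc)
        total = len(doc)
        weights.append({
            word: (count / total) * math.log(n_docs / doc_freq[word])
            for word, count in counts.items()
        })
    return weights


# Hypothetical segmented reviews
docs = [
    ["酒店", "干净", "服务"],
    ["酒店", "位置", "方便"],
    ["酒店", "服务", "贴心"],
]
for doc_weights in tf_idf(docs):
    top = sorted(doc_weights.items(), key=lambda kv: kv[1], reverse=True)[:2]
    print(top)
```

A word that appears in every document, such as 酒店 in this sample, gets an IDF of zero and therefore a weight of zero, which is why TF-IDF favours words that distinguish one review from the others.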

Article link: http://soscw.com/index.php/essay/81638.html